Why Your Brand Must Move Beyond Visual Marketing

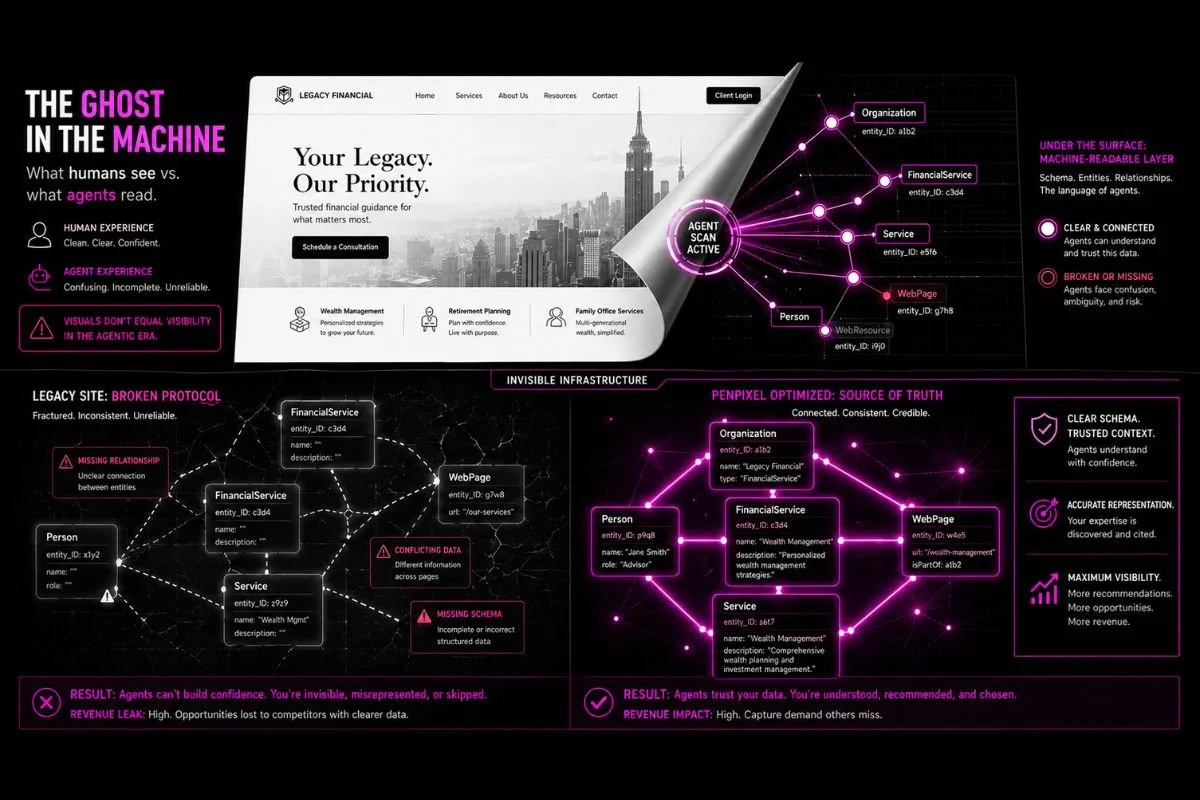

Legacy marketing models prioritize human search over machine-readable verification, creating a critical “authority gap” as search engines transition to task engines. We explain how to transition your digital estate into a "Source of Truth" that AI agents can trust, verify, and prioritize within the emerging autonomous revenue layer.

A Technical Roadmap

Understand the multi-step "Trust and Retrieval" framework AI uses to verify your brand’s E-E-A-T.

Operational Security

Learn why source verification serves as a "safety lock" for agentic systems and how to prevent its bypass.

Resource Realignment

A strategy to shift resources from low-value top-of-funnel content to high-authority, lower-funnel signals.

Competitive Advantage

Insights into transforming your brand from a passive visual destination into a verifiable protocol endpoint.

A recent client discussion highlighted a common, desperate misunderstanding of the current state of discovery.

“We want to know our Share of Voice (SOV) in AI-generated answers,” they told me.

They were using specialized tools to map hundreds of prompt variations, trying to guess every way a human might ask about their services.

This is still legacy thinking, just with a new tool.

In a country of 350 million people, you cannot out-write infinite human variability. More importantly, AI models and agents are evolving faster than marketing teams can keep up with. These systems don’t need you to explain "what is" or "who is"—they already possess that foundational data.

You must stop chasing prompts and start proving you are a "Source of Truth" that agents can cite and use.

AI verifies your entity through rigorous E-E-A-T benchmarks across the entire digital ecosystem. If you remain stuck in top-of-funnel informational content, you are functionally invisible to the systems now gatekeeping your revenue.

How Do LLMs Verify Sources Before Generating Answers?

AI systems verify sources through a multi-step "Trust and Retrieval" framework:

Retrieval Eligibility Check: The AI verifies if it can technically "find and parse" your information. If the content lacks a clear structure or schema markup, the AI cannot trust it for citation purposes.

External Validation: LLMs cross-reference your website's claims with high-trust industry publications, major blogs, and reputable organizations. They verify authority by looking for consensus among third-party sources rather than relying on your internal marketing.

Entity Mapping: The system checks if your brand is recognized in established databases like Google’s Knowledge Graph, LinkedIn, or Wikidata. This confirms your brand is a real, validated entity.

Topical Depth Analysis: The AI evaluates your "Semantic Authority" to determine if you have comprehensive coverage of a niche. It verifies whether you are a primary expert or if your content is "thin" and scattered.

Narrative Consistency: The system verifies "brand signal stability." If your messaging, terminology, and brand description are inconsistent across different platforms (e.g., website vs. LinkedIn), the AI’s confidence in your data decreases.

Freshness Verification: Models prioritize "active" signals. They verify when your high-impact pages were last updated, typically favoring data reinforced within the last 90 days to ensure accuracy.
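Two of the checks above, Narrative Consistency and Freshness Verification, are easy to self-audit. The following is a minimal sketch, not a description of how any AI system actually scores brands: `BrandProfile`, `audit`, and the 0.8 similarity threshold are hypothetical names and values chosen for illustration, and plain string similarity stands in for whatever semantic comparison a real model performs. Only the 90-day window comes from the text above.

```python
from dataclasses import dataclass
from datetime import date
from difflib import SequenceMatcher

FRESHNESS_WINDOW_DAYS = 90  # the 90-day window mentioned in the list above


@dataclass
class BrandProfile:
    platform: str        # e.g. "website", "linkedin" (illustrative labels)
    description: str     # brand description as published on that platform
    last_updated: date   # when that description was last revised


def similarity(a: str, b: str) -> float:
    """Rough textual similarity in [0, 1]; a stand-in for semantic comparison."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def audit(profiles: list[BrandProfile], today: date,
          min_similarity: float = 0.8) -> list[str]:
    """Return warnings for stale or inconsistent profiles.

    The first profile is treated as the reference description.
    The min_similarity default is an assumed threshold, not a known standard.
    """
    warnings: list[str] = []
    reference = profiles[0]
    for p in profiles:
        age = (today - p.last_updated).days
        if age > FRESHNESS_WINDOW_DAYS:
            warnings.append(f"{p.platform}: stale ({age} days since update)")
        if p is not reference and similarity(reference.description, p.description) < min_similarity:
            warnings.append(f"{p.platform}: description diverges from {reference.platform}")
    return warnings
```

Calling `audit([...], today=date.today())` with one `BrandProfile` per platform returns a list of plain-text warnings, which is enough to spot a LinkedIn page that was last touched months ago or a directory listing that describes the company differently from the website.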

Why Is Source Verification Important For Agentic AI?

For an agent to act, its logic must be grounded in validated evidence. Without rigorous verification of your entity, the agent risks operational failure or security vulnerabilities.

As these systems evolve from generating text to executing autonomous tasks—such as processing transactions, managing workflows, or making recommendations—they require more than just data. They require a high Citable Authority Score.

Source verification is the "safety lock" for AI agents. When an AI system "fans out" to verify sources, it isn't just looking for information; it is looking for a Source of Truth that meets rigorous benchmarks for Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). The agent isn't simply verifying your data; it is verifying the E-E-A-T of the entity behind that data.

If your brand cannot prove its authority through these signals, the agent will bypass you entirely in favor of competitors who provide the clear, verifiable breadcrumbs it needs to follow.

How agentic AI systems work

Agentic AI systems move beyond generating text to performing autonomous actions. Source verification is critical for these systems for the following reasons:

Autonomous Decision Integrity: Unlike standard LLMs that only summarize, agents use data to execute tasks. Unverified or inaccurate sources lead to failed actions, incorrect purchases, or flawed workflows.

Tool-Use Reliability: Agents rely on structured data to interact with APIs and software. Verified, high-quality sources ensure that the agent understands the "rules" of the environment in which it operates.

Path Prioritization: Agents are programmed to follow the path of highest confidence. If your brand’s source signals are weak or unverified, the agent will bypass your site in favor of a competitor with higher "Citable Authority."

Security (Prompt Injection): Verification helps guard against "Indirect Prompt Injection," where an agent follows malicious instructions hidden in unverified third-party content.

Reduced Hallucination in Execution: By grounding an agent in verified "Truth Sets," you ensure the autonomous steps it takes are based on fact rather than probabilistic guesswork.
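The Path Prioritization and Security points above can be pictured as a simple gate: unverified sources are excluded first, and the agent then follows the remaining source with the highest confidence. This is a minimal sketch under stated assumptions. `Source`, `choose_source`, the `verified` flag, and the 0.7 threshold are all hypothetical; real agent frameworks do not expose a standard "Citable Authority" score.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Source:
    name: str
    verified: bool      # hypothetical flag: did the source pass verification?
    confidence: float   # hypothetical score in [0, 1]


def choose_source(candidates: list[Source],
                  min_confidence: float = 0.7) -> Optional[Source]:
    """Pick the verified source with the highest confidence.

    Returns None when nothing qualifies, modelling an agent that
    declines to act rather than guess.
    """
    eligible = [s for s in candidates
                if s.verified and s.confidence >= min_confidence]
    if not eligible:
        return None
    return max(eligible, key=lambda s: s.confidence)
```

Note the ordering of the two conditions: a source with a very high score but no verification is never selected, which is the behavior the "safety lock" description implies.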

Why Preparing for Agentic AI Is Important

The internet is progressing from a place where humans search for information to one where autonomous agents perform tasks on our behalf. Preparing your website and brand is critical because AI systems are rapidly taking over the internet's revenue layer.

Traditional organic click-through rates drop by up to 58% when AI Overviews appear, but highly qualified AI-referred traffic converts at a 42% higher rate.

With AI agents projected to orchestrate trillions in global spending, being invisible to them is a massive financial risk. If your brand signals are weak, the agent will bypass your site in favor of a competitor with higher "Citable Authority."

Are brands currently prepared for agentic AI?

The reality is that most digital estates are built exclusively for human eyes, relying on visual hierarchy and self-promotional copy.

However, AI agents do not perceive design; they require machine-readable protocols to interact. If an agent hits a traditional visual checkout form it cannot parse, it is likely to abandon the cart.

As illustrated in our intro, we’re finding that the challenge today isn’t a lack of effort; it’s a misalignment of resources.

Marketing leaders, managing the heavy demands of immediate growth, are operating on revenue models designed for a human-centric search era. While teams are often high-performing, the metrics they chase, like human search volume and top-of-funnel keyword rankings, do not account for the rapid evolution of Agentic AI.

"Winning the verification"

AI systems do not blindly trust your website; they verify authority by looking for consensus among high-trust third-party sources. If your strategy remains focused on capturing human prompt variations, you risk missing the evolution of how commerce is actually conducted.

To survive this, brands must stop acting as passive visual destinations and transform into verifiable protocol endpoints.

The Solution: Transitioning to machine-readable authority

To align with the trajectory of agentic commerce, you must shift focus from visual destinations to verifiable entity signals:

Reallocate to the Lower Funnel: Move beyond "what is" content. Prioritize proprietary, expert-level data that defines your specific solution and satisfies E-E-A-T benchmarks. This involves understanding the underlying intent of your ICP.

Establish Entity Consensus: Ensure your brand’s E-E-A-T is reflected across essential third-party databases, industry publications, and technical schema, making your website the "Source of Truth" for your proprietary information.

Optimize for Tool-Use Reliability: Structure your data so agents can interact with it as a protocol. This ensures your brand is the path of highest confidence for autonomous recommendations.
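One concrete form of the "technical schema" mentioned above is schema.org JSON-LD. The sketch below builds an `Organization` object whose `sameAs` property links the brand to its third-party profiles. The `@context`, `@type`, `name`, `url`, `description`, and `sameAs` keys are real schema.org vocabulary; the helper name `organization_jsonld` and every value passed to it are placeholders for illustration.

```python
import json


def organization_jsonld(name: str, url: str, description: str,
                        same_as: list[str]) -> str:
    """Serialize a schema.org Organization as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs points at other pages that identify the same entity
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values only; substitute your own verified profile URLs.
    print(organization_jsonld(
        name="Example Co",
        url="https://www.example.com",
        description="Example Co builds scheduling software for clinics.",
        same_as=[
            "https://www.linkedin.com/company/example-co",
            "https://www.wikidata.org/wiki/Q0",
        ],
    ))
```

The resulting JSON is typically embedded in the page inside a `<script type="application/ld+json">` element. Keeping the `description` identical to the wording used on the linked profiles also supports the consistency signal described earlier.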

By evolving these models now, leaders move from a reactive state of chasing prompts to a proactive position of owning the signals that drive autonomous revenue.

The Opportunity Cost of Expertise

Ensuring your brand is agentic-forward requires real immersion in machine learning and LLM architecture. This is not a weekend pivot; it is a deeply technical requirement that shapes how you make business and financial decisions as AI takes over the revenue layer.

For most leaders, the luxury of "leveling up" personally doesn't exist. Your mandate is to lead and grow, not to spend years in the weeds of machine learning. When AI search protocols change weekly, attempting to build this expertise in-house is more than a distraction—it’s a strategic risk. By the time your team masters today’s standards, the architecture will have progressed again.

If your team is busy with legacy SEO while competitors capture the AI revenue layer, what is the cost of your brand's invisibility? Would it be more effective to leverage a partner who has already mapped the technical terrain, allowing you to focus on high-level strategy and growth?

Contact us to find answers to these questions.

Frequently Asked Questions

Q: Does focusing on lower-funnel content risk losing brand awareness?

No. High-volume, top-of-funnel traffic rarely converts in an AI-gated ecosystem. By owning the specific "truths" of your solution, you trade vanity metrics for high-intent citations that actually drive revenue.

Q: What is the immediate cost of fixing a JavaScript-heavy site for AI?

Beyond technical debt, the cost is invisibility. AI agents often fail to render complex scripts; transitioning to server-side rendering or machine-readable protocols is an essential investment for accessibility.

Q: How do LLMs distinguish proprietary expertise from marketing copy?

Systems cross-reference claims against third-party databases to find consensus. If expertise only exists on your domain without external validation, it is treated as unverified marketing.

Q: Can an existing SEO team pivot to this model?

It requires a shift from keyword-matching to entity management. Your team must move beyond human search volume to prioritize machine-readable data structures and E-E-A-T signals.

Q: How long does it take to become a "verifiable protocol endpoint"?

Technical corrections can show results in under 30 days. However, building the deep "consensus of truth" required for consistent agentic citation is a continuous strategic progression.

Q: Will AI-search readiness hurt my traditional Google rankings?

No. Optimizing for E-E-A-T and technical clarity aligns with Google’s quality standards, strengthening entity integrity and improving both traditional and AI-generated visibility.
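For the JavaScript-heavy site question above, a quick way to gauge the problem is to check which key phrases appear in the raw HTML your server returns, before any script runs. This is a minimal sketch using only Python's standard `html.parser` module; `VisibleText` and `missing_from_raw_html` are hypothetical helper names, and the check assumes a client that does not execute JavaScript, which may not match every crawler or agent.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script> and <style> elements."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0          # > 0 while inside script/style
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the unrendered HTML text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in key_phrases if p.lower() not in text]
```

Feed it the response body of a plain HTTP request for a high-value page, along with the facts you most need cited (pricing, product names, service areas). Any phrase it returns exists only after client-side rendering, which makes that page a candidate for server-side rendering.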